Can VGA Carry a 4K Signal?

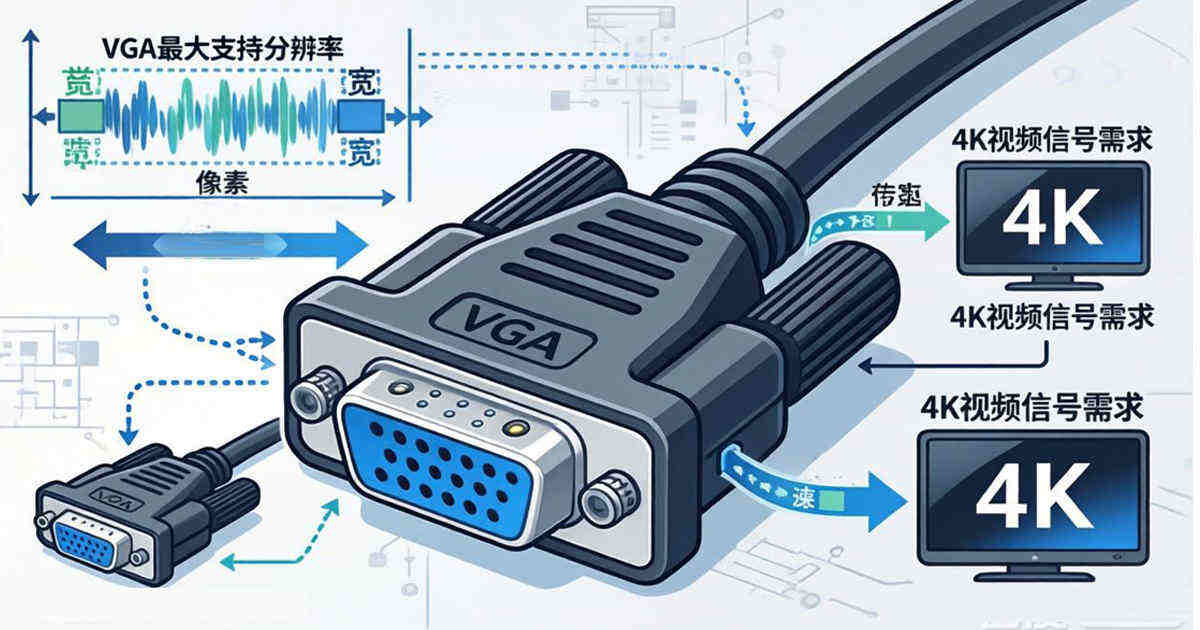

For decades, the Video Graphics Array (VGA) connector was the universal standard for connecting computers to displays. Even today, in an era dominated by HDMI and DisplayPort, millions of legacy VGA ports remain in operation across conference rooms, educational institutions, and industrial settings. As 4K UHD becomes the baseline for visual clarity, a critical question emerges: Can VGA carry a 4K signal?

The short answer is no, not in any usable or compliant way. An analog interface like VGA has no "hard cap" in the way a digital link does, but analog bandwidth, signal degradation, and the limits that DACs and displays are actually built to restrict VGA to a practical maximum of roughly 1920x1080 (1080p) or 1920x1200 (WUXGA). Attempting to push 4K through VGA results in sync failures or, at best, a heavily blurred image with no fine detail.

This article dives deep into the engineering data, compares real-world specifications, and explains precisely why the analog legacy cannot support the digital UHD future.

Summary

VGA cannot carry a true 4K signal because of its analog nature and limited bandwidth (a pixel clock of roughly 400 MHz at best). Although a VGA cable carries a continuous waveform that could in theory scale, signal-to-noise ratio (SNR) and thermal noise restrict it in practice to about 1920x1080 at 60Hz. Professional test equipment lists VGA outputs topping out at 1920x1200 or 2048x1080, while 3840x2160 at 60Hz requires a digital interface such as HDMI 2.0 (18 Gbps) or DisplayPort.

1. The Bandwidth Bottleneck: Analog vs. Digital

To understand why VGA fails with 4K, you must look at how the signal is transmitted. VGA is an analog interface. It works by varying voltage levels continuously to represent brightness and color. HDMI, conversely, is a digital interface that sends the picture as a stream of discrete 1s and 0s.

The Data Disparity

A 4K signal (3840 x 2160) at 60Hz with standard color depth requires a massive amount of data.

- VGA (Analog): It does not have a "data rate" in Mbps or Gbps. Instead, it has a pixel clock (the rate at which pixels are drawn). High-quality VGA DACs (Digital-to-Analog Converters) max out around 400 MHz. However, signal integrity drops drastically after 300 MHz.

- HDMI 2.0 (Digital): Operates at a maximum aggregate data rate of 18 Gbps (6 Gbps on each of its three TMDS data channels).

To put it in perspective: 4K @ 60Hz requires roughly 18 Gbps on an HDMI 2.0 link. An analog signal carrying the same picture would need a pixel clock of roughly 600 MHz, far above what VGA DACs deliver, and at those frequencies the cable starts to behave like an antenna, picking up electromagnetic interference (EMI) that destroys signal integrity.
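The arithmetic behind these figures can be sketched in a few lines of Python. The total frame sizes used here (4400 x 2250 for 3840x2160 at 60Hz, 2200 x 1125 for 1920x1080 at 60Hz) are the standard CTA-861 timings including blanking; they are an assumption brought in for illustration, not figures from this article, and the function names are made up for the example.

```python
# Sketch: pixel clock and HDMI TMDS link rate for a given video timing.
# Assumption: CTA-861 total frame sizes (active pixels + blanking).

def pixel_clock_hz(h_total: int, v_total: int, refresh_hz: int) -> int:
    """Pixels clocked out per second, blanking intervals included."""
    return h_total * v_total * refresh_hz

def tmds_link_rate_bps(pixel_clock: int) -> int:
    """TMDS sends 10 bits per pixel clock on each of 3 data channels."""
    return pixel_clock * 3 * 10

uhd_clock = pixel_clock_hz(4400, 2250, 60)  # 3840x2160 @ 60Hz
fhd_clock = pixel_clock_hz(2200, 1125, 60)  # 1920x1080 @ 60Hz

print(f"4K60 pixel clock:    {uhd_clock / 1e6:.1f} MHz")
print(f"1080p60 pixel clock: {fhd_clock / 1e6:.1f} MHz")
print(f"4K60 TMDS link rate: {tmds_link_rate_bps(uhd_clock) / 1e9:.2f} Gbps")
```

Under these assumptions the 4K60 pixel clock comes out at 594 MHz and the link rate at 17.82 Gbps, which is where the "roughly 18 Gbps" figure comes from. 1080p60 needs only 148.5 MHz, comfortably inside what a VGA DAC can produce.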

2. The "Soft Limit": Why 1080p is the Realistic Maximum

While you might find a GPU driver that allows you to select 3840x2160 on a VGA output, the physical reality is that you are not actually getting a 4K image.

In practice, VGA is limited to HD (High Definition) or lower resolutions. According to specifications from display manufacturers and test equipment vendors, the highest resolution listed for VGA is 2048 x 1080, and often only at reduced refresh rates or with reduced blanking intervals.

Real-World Performance Data

Professional AV testing equipment, such as the NTI MONTEST-HD4K and Kramer 860, is used to certify signal quality. The specifications of these devices draw a hard line:

| Signal Type | Max Resolution Supported | Total Bandwidth | Signal Integrity |
|---|---|---|---|
| VGA | 1920x1080 @ 60Hz (or 2048x1080) | ~388 MHz Pixel Clock | Analog (Susceptible to Noise) |
| HDMI | 3840x2160 @ 60Hz (4K) | 18 Gbps / 600 MHz TMDS | Digital (Error-Corrected) |

The MONTEST-HD4K generator explicitly lists VGA output support stopping at 1920x1200 and 2048x1080, while its HDMI output simultaneously pushes 4096x2160 at 60Hz. This is not a cable quality issue; it is a fundamental protocol limitation.

3. The Physics of Analog: Noise, Crosstalk, and Attenuation

Even if you had a theoretical "perfect" VGA cable, physics would still prevent 4K. VGA splits the image into five separate signals: Red, Green, Blue, Horizontal Sync, and Vertical Sync.

As resolution increases, the time to draw each pixel decreases. At 4K, the voltage changes required are so fast that they begin to blur together. This is called rise time degradation.
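To make the shrinking time budget concrete, here is a minimal sketch that converts a pixel clock into the time available per pixel. The two clock values (148.5 MHz for 1080p60 and 594 MHz for 4K60) are assumptions based on standard CTA-861 timings, and `pixel_period_ns` is a name made up for this example.

```python
# Sketch: time available to settle the voltage for one pixel.
# Assumption: CTA-861 pixel clocks for 1080p60 and 4K60.

def pixel_period_ns(pixel_clock_hz: float) -> float:
    """Duration of a single pixel in nanoseconds."""
    return 1e9 / pixel_clock_hz

for label, clock in [("1080p60", 148.5e6), ("4K60", 594e6)]:
    print(f"{label}: {pixel_period_ns(clock):.2f} ns per pixel")
```

At 1080p60 each pixel gets about 6.7 ns; at 4K60 that drops to about 1.7 ns. The DAC, cable, and the display's ADC would all have to settle four times faster for neighbouring pixels to stay distinct.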

Furthermore, because VGA is analog, it is susceptible to thermal noise (random electron movement). When you try to push the pixel clock past 300 MHz, the signal-to-noise ratio (SNR) collapses. The result is "ghosting" (where reflections of pixels appear to the right of the actual pixel) and a complete loss of fine text detail. For 4K to be effective, you need pixel-perfect accuracy. VGA offers "close enough," which is insufficient for UHD.

4. The Digital Conversion Trap (VGA to 4K Adapters)

You might find "VGA to 4K adapters" online. It is crucial to understand that these are not actually transmitting 4K over VGA.

These adapters are active upscalers. They take a low-resolution analog signal (usually 1080p or 720p) from the VGA source, convert it to digital, and then artificially stretch (upscale) the picture to fit a 4K screen. You are not getting native 4K resolution. You are getting a 1080p image displayed on a 4K grid.
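The point that upscaling adds pixels but not detail can be illustrated with the simplest possible scaler, nearest-neighbour 2x. This is only a sketch: real adapters most likely use smoother filters, and the tiny 2x2 "frame" stands in for a full 1920x1080 image.

```python
# Sketch: nearest-neighbour 2x upscaling (hypothetical helper, not an adapter's
# actual algorithm). Each source pixel simply becomes a 2x2 block.

def upscale_2x(image: list[list[int]]) -> list[list[int]]:
    out = []
    for row in image:
        wide = [px for px in row for _ in range(2)]  # repeat each pixel horizontally
        out.append(wide)
        out.append(list(wide))                       # repeat each row vertically
    return out

source = [[10, 20],
          [30, 40]]          # stand-in for a 1920x1080 frame
scaled = upscale_2x(source)  # stand-in for the 3840x2160 output

values_in = {px for row in source for px in row}
values_out = {px for row in scaled for px in row}
print(scaled)
print("new information added:", values_in != values_out)
```

The output has four times as many pixels, yet every value in it already existed in the source. Smoother filters blend neighbouring values instead of copying them, but they still cannot recover detail that the 1080p signal never carried.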

If your source device only has VGA (e.g., an older laptop), you cannot get native 4K output. The source's RAMDAC (Random Access Memory Digital-to-Analog Converter) physically cannot generate the analog voltage patterns required for 4K.

5. Comparative Standards: What you need for 4K

To achieve true 4K UHD (3840x2160p), you must use a digital standard. Here is how the common interfaces stack up according to industry specifications:

- HDMI 1.4: Supports 4K, but only at 24Hz or 30Hz (not well suited to general computing or gaming).

- HDMI 2.0/2.1: Supports 4K at 60Hz (2.0) and 120Hz (2.1) with HDR. This is the current standard.

- DisplayPort (DP): Designed for computers. Versions 1.2 and later handle 4K at 60Hz, and DP is the preferred standard for high-refresh-rate monitors.

- VGA: Unsupported. Limited to HD (1920x1080) or lower.

6. The Legacy Exception: Why Projectors still have VGA

You will still find VGA ports on many modern projectors. This is purely for backward compatibility with legacy laptops. If you connect a 4K source to a 4K projector via HDMI, the projector will display 4K. If you connect that same source to the VGA port, the signal arrives at 1080p or lower and the projector's internal scaler fits it to the panel. Compared with 4K, you are losing 75% of your resolution data.
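The 75% figure follows directly from the pixel counts, as this short check shows (variable names are illustrative):

```python
# Sketch: how many pixels a 1080p signal is missing relative to 4K UHD.
uhd_pixels = 3840 * 2160
fhd_pixels = 1920 * 1080

lost_fraction = 1 - fhd_pixels / uhd_pixels
missing_pixels = uhd_pixels - fhd_pixels

print(f"4K UHD pixels: {uhd_pixels:,}")
print(f"1080p pixels:  {fhd_pixels:,}")
print(f"Lost:          {lost_fraction:.0%} ({missing_pixels:,} pixels)")
```

A 1080p frame holds about 2.07 million pixels against about 8.29 million for 4K, so roughly 6.2 million pixels have to be interpolated by the display's scaler.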

Frequently Asked Questions (FAQ)

Q1: Can VGA do 4K at a very low refresh rate, like 24Hz? A: Technically, lowering the refresh rate lowers the required pixel clock. In controlled laboratory settings, some equipment can sync a 4K signal over VGA at 15-20Hz. However, this results in visible flicker, makes mouse movement painfully choppy, and is not supported by standard operating systems or drivers. For practical purposes, it does not work.

Q2: Is a gold-plated or expensive VGA cable able to carry 4K? A: No. While high-quality cables reduce signal loss (attenuation), they cannot overcome the bandwidth limit of the VGA controller (DAC) on your graphics card. Even a $200 VGA cable is physically incapable of transmitting the 18 Gbps equivalent data required for 4K. At best, it maintains 1080p quality over longer distances.

Q3: My GPU software lets me select 3840x2160 on VGA. Why? A: The GPU is rendering the desktop at 4K internally but then downsampling the image to 1080p before converting it to analog. You are essentially viewing a 4K image that has been squeezed into a 1080p signal. It acts as a crude form of anti-aliasing, but you are not seeing true 4K pixel detail.

Q4: Why does my 4K monitor look blurry when I use VGA? A: Because the monitor is receiving a 1080p (or lower) analog signal. The monitor's scaler is then forced to guess the colors of the missing 6 million pixels to fill the 4K screen. This "stretching" always results in a soft, blurry image compared to a native digital connection.

Q5: Does VGA support audio? A: No. VGA is video-only. If you use a VGA cable, you will require a separate 3.5mm audio cable for sound. HDMI carries both.

Q6: What is the max distance for a VGA cable at 1080p? A: Because VGA is analog and voltage-sensitive, the maximum reliable distance is about 15 meters (50 feet). Beyond that, the image becomes dim and ghosted. 4K would fail at less than 1 meter due to the required bandwidth.

Conclusion

While VGA was a robust standard that served the industry for three decades, it is physically incapable of supporting 4K UHD. The analog noise floor, limited pixel clock rates (sub-400 MHz), and industry specifications restrict VGA to a maximum resolution of 1920x1080. Attempting to force 4K results in a loss of sync or an unusably blurry image.

If you need to view 4K content, you must use a digital connection: HDMI 2.0, DisplayPort, or USB-C (with DisplayPort Alt Mode). Do not let the presence of a blue VGA port fool you; for high-definition video, it is a relic that belongs in the museum of computing history, not in a 4K workflow.